What Is Robots.txt in WordPress?

Robots.txt is a plain-text file that lets a website give instructions to web-crawling bots. Search engines like Google use these web crawlers, sometimes called web robots, to crawl and index websites. Most well-behaved bots look for a robots.txt file at the root of the site (for example, https://example.com/robots.txt) before they read any other file from the website. They do this to check whether the site’s owner has left special instructions on how to crawl and index the site.
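The check described above is what Python’s standard-library `urllib.robotparser` module implements. The sketch below is illustrative, not how Googlebot works internally: it assumes a hypothetical bot called `ExampleBot`, uses placeholder `example.com` URLs, and parses an inlined robots.txt instead of downloading one so that it runs offline.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download it
# from https://<site>/robots.txt before requesting any other page.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text the way a crawler would after fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def may_crawl(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the parsed rules let this user agent fetch the URL."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for url in ("https://example.com/hello-world/",
                "https://example.com/wp-admin/options.php"):
        verdict = "crawl" if may_crawl(rules, "ExampleBot", url) else "skip"
        print(f"{verdict}: {url}")
```

A polite crawler runs this check for every URL it discovers and simply skips the ones that come back as disallowed.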

Why Should You Use Robots.txt?

Having a robots.txt file isn’t crucial for a lot of websites, especially small ones. That said, there’s no good reason not to have one. It gives you more control over where search engines can and can’t go on your website, and that can help with things like:

- Preventing the crawling of duplicate content;

- Keeping crawlers out of sections of a website (e.g., your staging site);

- Preventing the crawling of internal search results pages;

- Preventing server overload;

- Preventing Google from wasting “crawl budget”;

- Preventing images, videos, and resource files from appearing in Google search results.

Note that while Google doesn’t typically index web pages that are blocked in robots.txt, there’s no way to guarantee exclusion from search results using the robots.txt file. As Google notes, if content is linked to from other places on the web, it may still appear in Google search results.

How to Create or Edit Robots.txt in WordPress

FTP upload

Create a plain-text file named robots.txt on your computer, then upload it to the root folder of your website so that it is reachable at yourdomain.com/robots.txt.

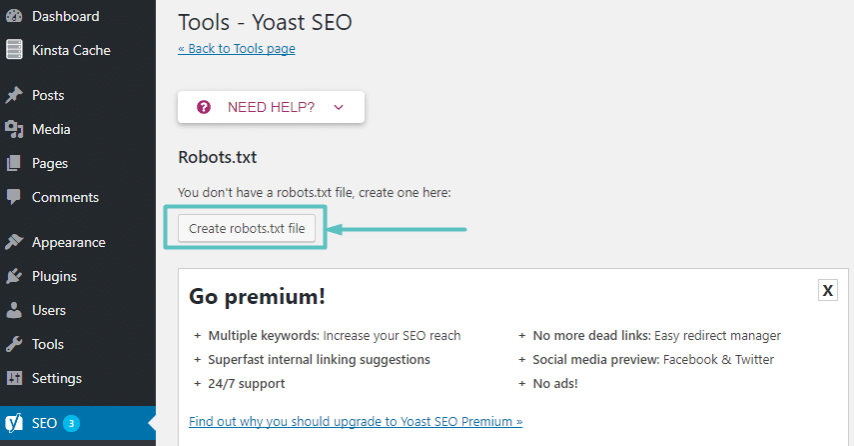
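If you prefer to script the FTP route, Python’s standard `ftplib` module can do the upload. This is a minimal sketch under stated assumptions: the host name, credentials, and `web_root` folder are hypothetical placeholders, and it uses `ftplib.FTP_TLS` (FTP over TLS) because many hosts require encrypted connections; use `ftplib.FTP` if yours only offers plain FTP.

```python
import ftplib
import tempfile
from pathlib import Path

# Placeholder rules; replace with your own directives.
ROBOTS_TXT = "User-agent: *\nDisallow: /wp-admin/\n"


def write_robots_file(directory: Path) -> Path:
    """Create robots.txt locally (the 'create it on your computer' step)."""
    path = directory / "robots.txt"
    path.write_text(ROBOTS_TXT, encoding="utf-8")
    return path


def upload_robots_file(path: Path, host: str, user: str, password: str,
                       web_root: str = "/") -> None:
    """Upload robots.txt into the site's root folder over FTP with TLS.

    host, user, password and web_root are hypothetical placeholders;
    many hosts name the web root public_html or htdocs.
    """
    with ftplib.FTP_TLS(host) as ftp:
        ftp.login(user, password)   # secures the control connection first
        ftp.prot_p()                # encrypt the data connection as well
        ftp.cwd(web_root)
        with path.open("rb") as fh:
            ftp.storbinary("STOR robots.txt", fh)


if __name__ == "__main__":
    # Only the local step runs here; the upload needs real credentials, e.g.
    # upload_robots_file(local, "ftp.example.com", "user", "secret", "/public_html")
    with tempfile.TemporaryDirectory() as tmp:
        local = write_robots_file(Path(tmp))
        print(local.read_text(encoding="utf-8"))
```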

With the Yoast SEO plugin

- Log in to your WordPress website. When you’re logged in, you will be in your ‘Dashboard’.

- On the left-hand side, you will see a menu. Click on ‘SEO’. …

- Click on ‘Tools’. …

- Click on ‘File Editor’. …

- Make the changes to your file.

- Save your changes.

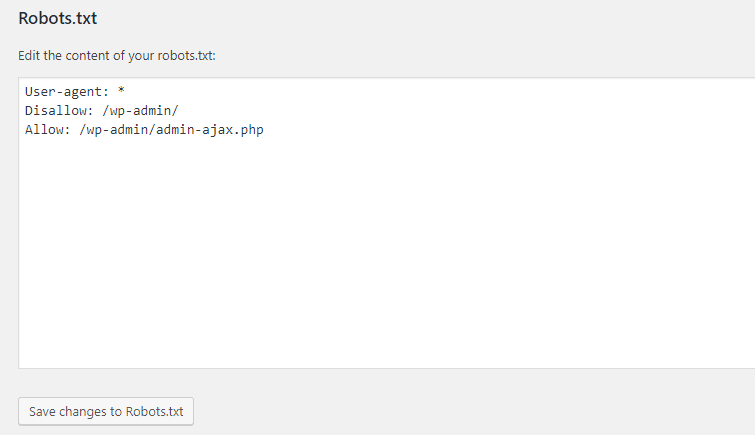

Best Robots.txt for WordPress SEO

Here is a commonly used robots.txt example for WordPress:

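```
User-agent: *
Disallow: /cgi-bin/
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
Disallow: /wp-content/cache/
Disallow: /wp-content/themes/
Disallow: /author/
Disallow: /trackback/
Disallow: /feed/
Disallow: /comments/
Disallow: */trackback/
Disallow: */feed/
Disallow: */comments/
```

Disallow means “don’t crawl”: compliant crawlers will not request URLs that match these paths. As noted above, this does not guarantee that those URLs stay out of Google or other search engines’ results. Be careful with the /wp-includes/, /wp-content/plugins/, and /wp-content/themes/ lines: those folders hold CSS and JavaScript files, and Google recommends letting Googlebot fetch such resources so it can render your pages correctly.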
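Before uploading rules like these, you can sanity-check them locally with Python’s `urllib.robotparser`. This sketch assumes a hypothetical `ExampleBot` crawler and placeholder `example.com` URLs. Two caveats: `urllib.robotparser` only does prefix matching and does not implement the `*` wildcard inside paths, so the `*/...` lines are left out; and it treats a blank line as the end of a group, so keep each group’s lines contiguous (the last part of the sketch shows what happens otherwise). Google’s own parser differs in details, so treat this as a quick check rather than a prediction of Googlebot’s behaviour.

```python
from urllib.robotparser import RobotFileParser

# The prefix-based rules from the example, written as one contiguous group.
# The wildcard lines (*/trackback/, */feed/, */comments/) are omitted because
# urllib.robotparser does not implement the * wildcard inside paths.
RULES = """\
User-agent: *
Disallow: /cgi-bin/
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
Disallow: /wp-content/cache/
Disallow: /wp-content/themes/
Disallow: /author/
Disallow: /trackback/
Disallow: /feed/
Disallow: /comments/
"""


def allowed(robots_txt: str, path: str, agent: str = "ExampleBot") -> bool:
    """Check a site-relative path against the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, "https://example.com" + path)


if __name__ == "__main__":
    for path in ("/hello-world/", "/wp-admin/", "/feed/", "/author/admin/"):
        print(path, "allowed" if allowed(RULES, path) else "blocked")

    # Same rules with a blank line after every directive: this parser closes
    # the group at the first blank line, so the Disallow lines end up outside
    # any group and are ignored.
    spaced = "\n\n".join(RULES.splitlines())
    print("/wp-admin/ with blank lines:", allowed(spaced, "/wp-admin/"))
```

Here a normal post such as `/hello-world/` stays crawlable while `/wp-admin/`, `/feed/`, and author archives are blocked; the blank-line variant lets everything through in this parser.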

Final Thoughts

The goal of optimizing your robots.txt file is to prevent search engines from crawling pages that are not meant to be publicly available. A common myth among SEO experts is that blocking WordPress category, tag, and archive pages will improve crawl rate and result in faster indexing and higher rankings.

We hope this article helped you learn how to optimize your WordPress robots.txt file for SEO. You may also want to see our ultimate WordPress SEO guide and the best WordPress SEO tools to grow your website.